Artificial Intelligence has reached an interesting stage in its life. It can write passable essays, sketch out bits of code, summarise long reports and even help you plan a holiday. Impressive, yes. Dependable, not always.

Ask a standard language model to draft a poem and you’ll probably be delighted. Ask it to interpret a regulation, diagnose a complex issue or recommend a course of action, and that easy confidence starts to look a little worrying. The wording sounds polished, but you’re left wondering, “Did it actually think about that, or did it just sound convincing?”

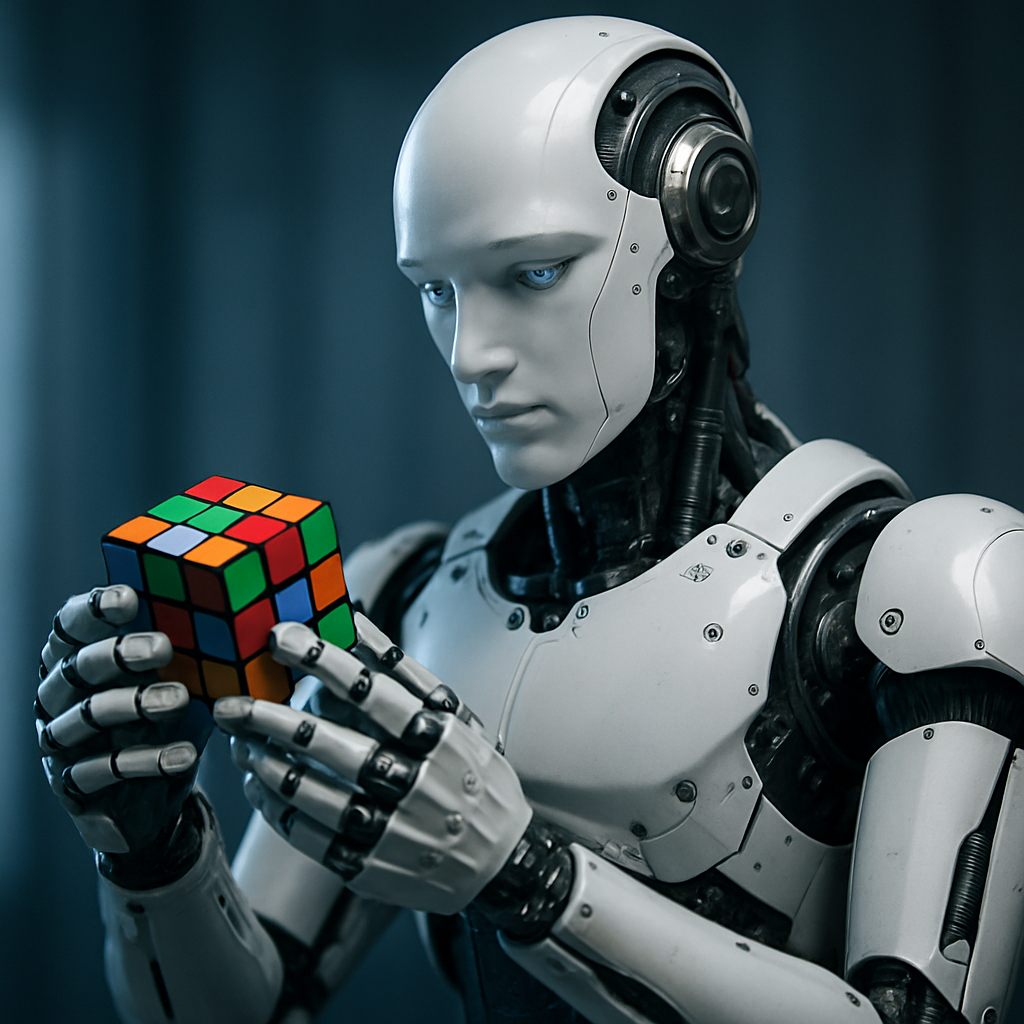

That question sits at the heart of a major shift in AI: the move towards reasoning-centric AI. Instead of treating models as fancy autocomplete machines, we are starting to treat them as systems that should think things through, explain themselves and reach conclusions you can trust.

This piece explores what that shift means, why it matters, and where it might take us next.

1. From clever text to careful thinking

Modern large language models are brilliant pattern matchers. They look at the words you give them, compare those against patterns learned from mountains of text, then predict what is likely to come next. That is the core trick.

The problem is that prediction is not the same as reasoning.

A model can produce a confident answer that looks plausible yet is completely wrong. It might invent sources, misapply a rule or miscalculate a figure, while sounding every bit as certain as a seasoned expert. This is what people refer to as “hallucination”, although that word makes it sound more mystical than it is. It is simply pattern matching where genuine reasoning is required.

Reasoning-centric AI tries to close that gap. The emphasis shifts from “Does this look like something a person might say?” to “Is this the right conclusion, and can the system show how it arrived there?”

Instead of jumping straight to an answer, a reasoning-centred system:

- breaks the problem into steps

- works through each step explicitly

- checks its own work

- uses tools when needed

- and only then produces a final response

In other words, it behaves less like a parrot and more like a careful colleague.

2. What we actually mean by “reasoning-centric AI”

The phrase covers a family of ideas rather than a single piece of technology. At its core, a reasoning-centric system is one that is designed to solve problems through structured thinking, not just pattern repetition.

That usually involves a few qualities:

- Multi-step logic

The system does not leap to the punchline. It moves through a series of intermediate steps that you can inspect.

- Consistency and coherence

It avoids contradicting itself halfway through an argument and keeps track of constraints and assumptions.

- Evidence-based decisions

Instead of bluffing, it looks things up, calls tools or checks data when needed.

- Ability to explain itself

It can show its working, rather like a student who writes out their maths steps.

- Awareness of limitations

When a question needs external data or a specific calculation, it knows to defer to a tool instead of guessing.

Think of it as the difference between a fluent storyteller and a careful analyst. Both can talk. Only one is consistently safe to trust with decisions that affect money, health, risk or law.

3. Why reasoning matters so much now

You might ask, “If the current models already do a decent job, why push harder on reasoning?” There are a few fairly blunt answers.

3.1 Trust and accountability

Most organisations are past the honeymoon phase with generative AI. They have seen the magic tricks, but also the mistakes. A model that occasionally invents a case law reference is charming in a demo and disastrous in a real legal opinion.

As AI moves into production environments, trust becomes non-negotiable. People want to know:

- Can we trace how this answer was produced?

- Does the logic hold up under scrutiny?

- Are we able to explain this decision to a regulator, a customer or a board?

Reasoning-centric AI is far better suited to that world.

3.2 The rise of agents

The next wave of AI is not simply about chatbots. It is about agents that act on your behalf: reading documents, updating systems, calling APIs, scheduling work, handling tasks end to end.

An agent that cannot reason is a liability. It may misjudge a step, send the wrong email, alter the wrong record or loop endlessly. An agent with sound reasoning can plan, adapt, double-check and recover when something goes sideways.

3.3 Hard problems demand more than fluent text

Scientific research, complex diagnostics, root-cause analysis, financial modelling, serious coding work: none of these are well served by pure pattern mimicry. They rely on stable truths, disciplined logic and careful argument.

Reasoning-centred systems are built with those use cases in mind.

3.4 Regulation and ethics

AI is moving into a world of audits, standards and oversight. Regulators are asking for transparency, safety assessments and clear evidence that AI is not fabricating critical decisions.

Models that can show how they reached a conclusion, step by step, sit on much firmer ground than those that simply produce a polished paragraph and call it a day.

4. How modern systems learn to reason

The interesting question is how we move from slick text generation to genuine reasoning behaviour. Several techniques work together here.

4.1 Chain-of-thought reasoning

Instead of asking the model for a direct answer, we ask it to think aloud.

For example, asked how many days there are from 14 March to 29 March, rather than replying only “15 days”, the model might write:

- March has 31 days.

- From 14 March to 29 March is 15 days.

- So the answer is 15 days.

This “chain of thought” structure gives the model a scaffold. It nudges the system away from instant guesses and towards stepwise reasoning.
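A minimal sketch of how an application might request and parse this kind of output. The prompt wording, the `Answer:` convention and the function names are illustrative assumptions rather than a standard API, and the canned response stands in for a real model call.

```python
import re
from typing import Optional

# Hypothetical instruction appended to every question; the wording is an assumption.
COT_SUFFIX = "Work through the problem step by step, then end with a line 'Answer: <value>'."


def build_cot_prompt(question: str) -> str:
    """Wrap a question so the model is asked to show its intermediate steps."""
    return f"{question.strip()}\n\n{COT_SUFFIX}"


def extract_answer(response: str) -> Optional[str]:
    """Return the text after the last 'Answer:' line, or None if there is none."""
    matches = re.findall(r"^Answer:\s*(.+)$", response, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


# Canned response standing in for a model call.
sample_response = (
    "March has 31 days.\n"
    "From 14 March to 29 March is 15 days.\n"
    "Answer: 15 days"
)

prompt = build_cot_prompt("How many days are there from 14 March to 29 March?")
print(extract_answer(sample_response))
```

Keeping the final answer on a clearly marked line means the steps can be logged for review while downstream code only consumes the conclusion.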

4.2 Tree-of-thought and alternative paths

Real-world problems often have several possible routes to a solution. Tree-of-thought approaches encourage the model to explore different paths, compare them and discard weaker ones.

That is especially useful when a question is ambiguous or when there are multiple valid strategies, such as planning a project, negotiating constraints or exploring design options.
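The explore, compare and discard idea can be sketched as a small beam search. This is a toy under stated assumptions: in a real system `expand` and `score` would be model calls that propose and rate partial solutions, whereas here they are simple arithmetic functions so the behaviour is easy to follow.

```python
from typing import Callable, List, Tuple

Path = Tuple[int, ...]


def tree_search(
    root: int,
    expand: Callable[[int], List[int]],
    score: Callable[[int], float],
    beam_width: int = 2,
    depth: int = 3,
) -> Path:
    """Explore several paths level by level, keeping only the best few."""
    frontier: List[Path] = [(root,)]
    for _ in range(depth):
        # Branch: extend every surviving path with each candidate next step.
        candidates = [path + (nxt,) for path in frontier for nxt in expand(path[-1])]
        if not candidates:
            break
        # Compare and prune: keep the highest-scoring partial paths.
        candidates.sort(key=lambda p: score(p[-1]), reverse=True)
        frontier = candidates[:beam_width]
    return max(frontier, key=lambda p: score(p[-1]))


# Toy problem (hypothetical): start at 1, each step either adds 3 or doubles,
# and paths are rated by how close they get to 11.
TARGET = 11
best = tree_search(
    root=1,
    expand=lambda n: [n + 3, n * 2],
    score=lambda n: -abs(TARGET - n),
)
print(best)  # (1, 4, 8, 11)
```

The beam width is the trade-off knob: a wider beam explores more alternatives at a higher cost per level.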

4.3 Graph-based reasoning

Some problems are best captured as networks rather than neat lists. Graph-based techniques let AI connect entities, relationships and constraints in a richer structure.

Imagine mapping out risk exposures across a group of companies, or the flow of data through a complex system. Representing these as graphs lets the system follow relationships explicitly, step by step, instead of inferring them from prose.
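As a rough illustration of the risk-exposure example, the sketch below stores dependencies as a graph and walks it to find every company exposed to a failure elsewhere. The company names and the `depends_on` structure are invented for illustration.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical group structure: each company maps to the companies it depends on.
depends_on: Dict[str, List[str]] = {
    "HoldCo": ["RetailCo", "FinanceCo"],
    "RetailCo": ["LogisticsCo"],
    "FinanceCo": ["LogisticsCo", "InsureCo"],
    "LogisticsCo": [],
    "InsureCo": [],
}


def exposed_to(failing: str, graph: Dict[str, List[str]]) -> Set[str]:
    """Return every company that depends, directly or indirectly, on `failing`."""
    # Invert the edges so we can walk from a dependency to its dependants.
    dependants: Dict[str, List[str]] = {}
    for company, deps in graph.items():
        for dep in deps:
            dependants.setdefault(dep, []).append(company)

    seen: Set[str] = set()
    queue = deque([failing])
    while queue:  # breadth-first traversal
        current = queue.popleft()
        for parent in dependants.get(current, []):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen


print(sorted(exposed_to("LogisticsCo", depends_on)))  # ['FinanceCo', 'HoldCo', 'RetailCo']
```

Because the traversal is explicit, the path from a failure to each exposed company can be reported alongside the conclusion.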

4.4 Reason and act

A particularly powerful pattern is sometimes called “ReAct”: reason, act, reason again.

The model does not sit in a vacuum. It:

- Thinks about what is needed.

- Calls a tool, such as a search service, calculator, database query or API.

- Looks at the result.

- Updates its reasoning.

- Repeats until it has enough information to answer.

This mix of reflection and action makes the system much less likely to bluff.
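The loop above can be sketched in a few lines. Everything here is a stand-in: `scripted_policy` plays the role of the model with hard-coded decisions, `calculator` is a toy tool, and the question and figures are made up. A real system would replace the policy with a model call.

```python
import operator
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, str, str, str]  # (thought, action, argument, observation)


def calculator(expression: str) -> str:
    """Toy tool: evaluates 'a <op> b' for +, -, * and /."""
    left, op, right = expression.split()
    ops = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}
    return str(ops[op](float(left), float(right)))


def scripted_policy(question: str, history: List[Step]) -> Tuple[str, str, str]:
    """Stand-in for a model: returns (thought, action, argument)."""
    if not history:
        return ("I need the cost of 12 licences at 4 each.", "calculator", "12 * 4")
    if len(history) == 1:
        return ("Now add the flat setup fee of 10.", "calculator", f"{history[0][3]} + 10")
    return ("I have enough information to answer.", "finish", history[-1][3])


def react(
    question: str,
    policy: Callable[[str, List[Step]], Tuple[str, str, str]],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> Tuple[str, List[Step]]:
    """Alternate reasoning and tool calls until the policy decides to finish."""
    history: List[Step] = []
    for _ in range(max_steps):
        thought, action, argument = policy(question, history)   # reason
        if action == "finish":
            return argument, history
        observation = tools[action](argument)                   # act
        history.append((thought, action, argument, observation))  # observe
    raise RuntimeError("no answer within the step budget")


answer, trace = react(
    "What do 12 licences at 4 each cost with a setup fee of 10?",
    scripted_policy,
    {"calculator": calculator},
)
print(answer)  # 58.0
```

The `max_steps` budget is a deliberate design choice: it stops an agent from looping endlessly when it never reaches a conclusion.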

4.5 Self-consistency and multiple attempts

Another helpful trick is simply to ask the model to solve the same problem several times, then compare the results. If three different chains of reasoning all converge on the same answer, confidence increases.

When they diverge, the system can analyse where they differ and correct itself.
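A minimal sketch of the voting step, assuming the answers have already been sampled from several independent runs. The `min_agreement` threshold and the function name are illustrative choices, not part of any particular library.

```python
from collections import Counter
from typing import List, Optional, Tuple


def self_consistent_answer(
    answers: List[str], min_agreement: float = 0.6
) -> Tuple[Optional[str], float]:
    """Majority vote over sampled answers.

    Returns (answer, agreement). The answer is None when the most common
    answer does not reach the agreement threshold.
    """
    if not answers:
        raise ValueError("need at least one sampled answer")
    counts = Counter(a.strip().lower() for a in answers)
    best, votes = counts.most_common(1)[0]
    agreement = votes / len(answers)
    return (best if agreement >= min_agreement else None), agreement


# Three sampled chains, two of which agree.
print(self_consistent_answer(["15 days", "15 days", "16 days"]))

# No agreement at all: the caller should investigate rather than pick one.
print(self_consistent_answer(["12", "15", "16"]))
```

Returning `None` on low agreement gives the caller an explicit signal to escalate or re-examine the divergent chains.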

4.6 Self-reflection

Modern systems can be asked to review their own work. “Are you sure about this? Where might you have gone wrong?” is a surprisingly effective prompt.

By encouraging the model to criticise its first answer, you often get a second pass that is more accurate and better structured.
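The draft, critique, revise cycle can be sketched as a loop. The `critique_stub` and `revise_stub` functions below are hypothetical rule-based stand-ins for what would normally be further model calls; only the control flow is the point.

```python
from typing import Callable, List, Tuple


def refine(
    draft: str,
    critique: Callable[[str], List[str]],
    revise: Callable[[str, List[str]], str],
    max_rounds: int = 3,
) -> Tuple[str, int]:
    """Repeatedly critique and revise until no issues remain or rounds run out."""
    current = draft
    rounds_used = 0
    for _ in range(max_rounds):
        issues = critique(current)
        if not issues:
            break
        current = revise(current, issues)
        rounds_used += 1
    return current, rounds_used


def critique_stub(text: str) -> List[str]:
    """Toy reviewer: flags hedged guesses and missing units."""
    issues = []
    if "probably" in text:
        issues.append("hedged guess")
    if "days" not in text:
        issues.append("missing unit")
    return issues


def revise_stub(text: str, issues: List[str]) -> str:
    """Toy reviser: applies a fixed repair for each flagged issue."""
    if "hedged guess" in issues:
        text = text.replace("probably ", "")
    if "missing unit" in issues:
        text = text + " days"
    return text


final, rounds = refine("It is probably 15", critique_stub, revise_stub)
print(final, rounds)  # It is 15 days 1
```

Capping the number of rounds keeps cost predictable, since each extra pass is another model call in practice.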

4.7 Tool use as part of reasoning

Perhaps the most important change is teaching models when not to rely on their internal statistics. If a question calls for a precise calculation, up-to-date information or database lookup, guessing is not acceptable.

Reasoning-centric systems are trained to hand work off to calculators, search engines, knowledge graphs and other tools, then incorporate those results into their reasoning.
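As a simplified illustration, the sketch below routes anything that looks like plain arithmetic to a small, safe evaluator and sends everything else to the model. The regular expression, the function names and the `model` callable are assumptions made for the example; production systems usually let the model itself decide when to call a tool.

```python
import ast
import operator
import re
from typing import Callable

_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def safe_eval(expression: str):
    """Evaluate +, -, * and / over numeric literals without using eval()."""
    def walk(node):
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        raise ValueError("unsupported expression")

    return walk(ast.parse(expression.strip(), mode="eval"))


# Crude heuristic (an assumption): digits, whitespace, operators, brackets, dots.
LOOKS_LIKE_ARITHMETIC = re.compile(r"^[\d\s+\-*/().]+$")


def answer(query: str, model: Callable[[str], str]) -> str:
    """Use the calculator for arithmetic, otherwise fall back to the model."""
    if LOOKS_LIKE_ARITHMETIC.match(query):
        try:
            return str(safe_eval(query))
        except (ValueError, SyntaxError, ZeroDivisionError):
            pass  # not something the tool can handle; let the model respond
    return model(query)


def fake_model(query: str) -> str:
    """Stand-in for a language model call."""
    return f"model response to: {query}"


print(answer("1234 * 5678", fake_model))  # 7006652
print(answer("Who approved the budget?", fake_model))
```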

4.8 Memory and long-running tasks

Many useful tasks unfold over hours or even days. A model with a short memory is like a colleague who forgets what you told them ten minutes ago.

Newer architectures use extended context windows, vector stores or external memory to preserve earlier steps, decisions and references. That allows more coherent long-form reasoning and reduces the tendency to contradict previous conclusions.
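To make the external-memory idea concrete, here is a deliberately tiny store that recalls earlier notes by similarity. It is a sketch only: the word-count "embedding" is a stand-in for a learned embedding model, and the notes are invented.

```python
import math
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: a bag of lower-cased words (real systems use learned vectors)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class Memory:
    """Stores notes and returns the ones most similar to a query."""

    def __init__(self) -> None:
        self.items: List[Tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def recall(self, query: str, k: int = 1) -> List[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


memory = Memory()
memory.add("Budget approved at 40k for the retail pilot")
memory.add("Meeting moved to Thursday afternoon")
memory.add("Vendor shortlist is Acme and Globex")

print(memory.recall("what budget was approved"))
```

Recalled notes would typically be placed back into the model's context before the next reasoning step, which is what helps it avoid contradicting earlier decisions.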

5. Agents: reasoning in motion

Once you have models that can think more clearly, the natural next step is to let them act. That is where AI agents come in.

A capable agent can:

- understand a goal

- design a plan

- break it into tasks

- choose and call tools

- integrate outputs

- monitor progress

- adapt when something unexpected happens

Here are a few examples.

5.1 Research companions

An agent might be tasked with “Summarise the implications of this new regulation for our retail business.” It could then:

- read the full text of the regulation

- identify the sections relevant to retail

- cross-reference existing policies

- highlight gaps

- produce a briefing for leaders

- suggest follow-up questions

Without strong reasoning, that job is simply not safe to automate.

5.2 Content and communications pipelines

Imagine a content supply chain where an agent handles the bulk of the legwork. It could:

- gather source material

- draft a piece

- check brand voice

- run compliance checks

- propose variations for different channels

- and prepare assets for publication

Reasoning is what allows it to respect tone of voice, policy constraints and audience needs rather than just churning out text.

5.3 Enterprise workflow helpers

Agents can be wired into CRMs, ticketing systems, finance tools and more. With reasoning capabilities, they can spot anomalies, propose actions, populate fields carefully and escalate issues rather than silently masking errors.

5.4 Coding assistants that do more than autocomplete

We already have assistants that suggest lines of code. The next step is agents that design a solution, weigh up trade-offs, write the code, run tests, interpret failures and iterate. None of that is possible without structured reasoning.

6. Where reasoning-centric AI is already changing the game

Although the field is young, several sectors are moving quickly.

6.1 Financial services

Banks and insurers care deeply about accuracy and auditability. Here, reasoning-centric AI supports:

- regulatory interpretation

- policy checking

- risk scoring

- fraud pattern analysis

- documentation for audits

The ability to keep a clear trail of “why we concluded this” is invaluable.

6.2 Healthcare

In clinical settings, suggestions must be grounded, transparent and cautious. AI that can explain its reasoning is far better suited to:

- structured symptom assessment

- guideline checking

- triage support

- treatment pathway comparison

The aim is always to support clinicians, not to replace them, but the quality of that support depends heavily on the quality of reasoning.

6.3 Legal and compliance

Law firms and in-house legal teams are experimenting with reasoning-aware systems for:

- contract review

- case comparison

- drafting arguments

- policy analysis

A model that can cite its sources, lay out the logic and note areas of uncertainty is much more useful than one that simply offers a polished paragraph.

6.4 Retail, marketing and customer experience

Even in more creative arenas, reasoning matters.

Agents can:

- build customer segments based on real data rather than hunches

- design experiments to test offers

- analyse campaign performance

- reason about stock levels, promotions and pricing strategies

You still get creativity, but it is grounded in logic and evidence.

6.5 Engineering and operations

From root-cause analysis in complex systems to planning maintenance schedules, reasoning-centred models provide structure, not just suggestions. They can connect symptoms to potential causes, weigh up different interventions and document the thinking behind a recommendation.

7. The snags and trade-offs

Of course, this new breed of AI is not magic. It brings its own challenges.

- Cost and speed

Multi-step reasoning takes more computation and time than a single quick reply. There is a balance to strike between depth and responsiveness.

- Data and training complexity

Teaching models to reason well often requires carefully curated examples of good reasoning. Those are harder to obtain than simple text pairs.

- Messy reasoning

Asking a model to “show its working” does not guarantee the working is correct. Chains of thought can be wrong or misleading, so they need verification.

- Safety and misuse

Better reasoning can be used for harmful ends if safeguards are weak. Systems still need guardrails, human oversight and robust governance.

- Verification remains a challenge

Checking that an argument is sound is itself a non-trivial task. Tooling and methods for automated verification are improving but are not perfect yet.

8. Looking ahead: where reasoning could take AI next

Despite those hurdles, the direction of travel feels clear. Several strands are worth watching.

8.1 Hybrid systems

We are likely to see more neuro-symbolic approaches, where intuitive pattern recognition from neural networks is combined with explicit logic engines, rule systems and constraint solvers. That blend promises the best of both worlds: flexibility and precision.

8.2 Verifiable reasoning

Rather than taking the model’s word for it, future systems will generate reasoning that can be checked automatically. Think of proof trees, argument graphs and structured rationales that downstream tools can analyse.

8.3 Richer cognitive architectures

Instead of a single, monolithic model, we will see collections of specialised components: memory, planning, world modelling, self-monitoring and more, all working together. That starts to look less like a chatbot and more like a cognitive system.

8.4 Self-improving agents

Agents will log their own mistakes, learn from them and refine their strategies over time. Feedback loops that close the gap between “how we reasoned” and “what actually happened” will drive continual improvement.

8.5 Multimodal reasoning

Reasoning will not be limited to text. Systems will reason across images, audio, video and structured data, pulling everything together into a coherent analysis.

8.6 Teaching and coaching

Perhaps one of the most intriguing applications is AI that can teach. A model that reasons well and explains clearly can become an excellent tutor, coach or mentor, adaptable to different learning styles and levels.

9. What organisations can do today

You do not need to wait for a science-fiction future to get value from reasoning-centric AI. There are practical steps you can take now.

- Identify where reasoning matters

Look for workflows where mistakes are costly, regulation is tight or explanations are crucial. Those are prime candidates.

- Start with decision support, not full automation

Use reasoning-aware systems to support experts, not replace them. Let humans stay in the loop while you build trust and understanding.

- Invest in governance and audit trails

Make sure AI outputs, reasoning steps and tool calls are logged and reviewable. That will pay off when questions arise.

- Design agentic workflows carefully

Give agents clear scopes, boundaries and escalation paths. Reasoning helps, but no system should have free rein across critical infrastructure.

- Help your people work with AI, not around it

Train teams to ask better questions, read reasoning traces and challenge outputs. A thoughtful human paired with a thoughtful AI is a powerful combination.
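On the audit-trail point, a reviewable log does not need to be elaborate. The sketch below records reasoning steps, tool calls and outputs as JSON lines; the field names, the `policy_search` tool and the example entries are hypothetical, and a real deployment would write to durable, access-controlled storage.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List


@dataclass
class AuditTrail:
    """Append-only record of what the system reasoned, called and produced."""

    entries: List[Dict[str, Any]] = field(default_factory=list)

    def record(self, kind: str, detail: Dict[str, Any]) -> None:
        self.entries.append({
            "seq": len(self.entries) + 1,                    # stable ordering
            "at": datetime.now(timezone.utc).isoformat(),    # UTC timestamp
            "kind": kind,                                    # reasoning, tool_call or output
            "detail": detail,
        })

    def to_jsonl(self) -> str:
        """Serialise as one JSON object per line, ready to append to a log file."""
        return "\n".join(json.dumps(entry, sort_keys=True) for entry in self.entries)


trail = AuditTrail()
trail.record("reasoning", {"text": "Need the current refund policy before answering."})
trail.record("tool_call", {"tool": "policy_search", "query": "refund window"})
trail.record("output", {"text": "Answer drafted from the retrieved policy section."})

print(trail.to_jsonl())
```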

10. A quieter, smarter revolution

The first wave of generative AI impressed people with flashy demos. The next wave will be quieter but far more important. It is the shift from sounding clever to being careful and clear.

Reasoning-centric AI is not about making systems chatty. It is about making them trustworthy.

It turns models into partners you can work with, not just tools you prod for a quick answer.

As this technology matures, the organisations that benefit most will be those that treat AI less as a novelty and more as a thinking companion: inspected, guided and woven thoughtfully into the way work gets done.

If AI is going to sit beside you on real decisions, it needs to do more than fill a page with words. It needs to think.
